a clueless start to node.js

node.js has been on my “to-try” stack of technologies for quite a while now. There has been quite some buzz around this framework recently, and since I wanted to have a closer look at what’s possible with JavaScript outside the browser anyway, it seemed a good reason for dealing with something “new” just for the sake of it, even without immediately having any meaningful use case at hand… Read on. 🙂

First contact

Getting started with node.js on an Ubuntu GNU/Linux 11.04 machine is not too difficult: sudo apt-get install nodejs will, as is almost to be expected, fetch and install the node.js package from the Ubuntu repositories, leaving you with /usr/bin/node, so you’re ready to go. To get something done with it, there is a neat, lean and really simple ‘Hello World’ lookalike on the node.js web page which gets you running “something” on node.js rather quickly. So far, so good. It feels a bit like working with Python scripts from a Unix shell / editor environment. And it works. Now for some more.
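For reference, a ‘Hello World’ of that kind can be sketched roughly as follows. This is a minimal sketch using only the core http module, not necessarily the exact sample from the node.js web page; the port 8124, the --serve switch and the function names are assumptions made for this example.

```javascript
// Minimal 'Hello World' HTTP service using only the core http module.
// Port, host, the --serve switch and all function names are illustrative.
const http = require('http');

// Answer every request with a plain-text greeting.
function handleRequest(req, res) {
  res.writeHead(200, { 'Content-Type': 'text/plain' });
  res.end('Hello World\n');
}

// Start listening; returns the server so callers can close() it again.
function startServer(port) {
  const server = http.createServer(handleRequest);
  server.listen(port, '127.0.0.1', function () {
    console.log('Server running at http://127.0.0.1:' + port + '/');
  });
  return server;
}

// Only start the server when explicitly asked to: node hello.js --serve
if (process.argv.indexOf('--serve') !== -1) {
  startServer(8124);
}
```

Saved as hello.js (a hypothetical file name) and started with node hello.js --serve, this should answer any request to http://127.0.0.1:8124/ with the greeting.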

Environment, I: Tooling

While I tend to use NetBeans and Eclipse (in that order) for my Java (EE) development tasks, I somehow hesitated to use a full-blown IDE for working with node.js / JavaScript code. Though there is some tooling for Eclipse as well as a NetBeans plugin for node.js integration, along with the JavaScript debugger in that IDE, it somehow just felt too large for this kind of work.

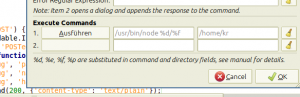

And as I continuously use Geany as a text editor / development tool in situations in which things should be a little more lightweight, this was my way to go here as well. For most of the common programming and markup languages Geany provides basic editor functionality (syntax highlighting, code folding, structural outline, very basic code completion) out of the box, just enough for my needs in this situation. Adding to this, it also provides a bunch of plugins, like GeanyVC, which integrates Git, SVN, CVS and the like. The only customization I had to do was to make the editor run .js files with node.js when pressing the F5 key, which is the default way of running things in Geany. This is achieved by setting the right executable and argument in “Build” -> “Set Build Commands” like this:

After that, pressing F5 while editing a .js file will make a terminal pop up, showing evidence that node.js is actually running your script. So far, so good.

Environment, II: npm

So, again trying to move forward fast, the first obvious thing to do was to copy and paste some sample code from some tutorial page into an empty .js file, hit F5, and see a wagonload of error messages as node.js complained about a set of modules being required yet not available. Fail. Looking into things a little deeper, I quickly learnt about npm, the “node package manager”, which, akin to the package managers in most current Linux distributions, is there for installing node.js modules (and their dependencies). But before getting started with it, some updating needs to be done: according to the npm README file, a node.js version > 0.4 is required for that.

Unfortunately, the version in Ubuntu GNU/Linux 11.04 is 0.2.8. Not nice. But help is there, as there is an Ubuntu PPA providing more up-to-date versions. Adding the PPA in recent Ubuntu versions is as straightforward as then updating the packages, and after that npm installs and works fine. From there, working with npm is simple if you are used to any of the Linux package managers, and there is also a browsable repository to see which things are available for you to install and use.

Some code, some modules: formidable

To play with node.js some more, I tried to look for (and, hopefully, solve) a problem I also deal with once in a while in the Java EE world: uploading large files. It is something I consider not too spectacular, yet something which, much to my surprise, still causes pain at times when trying to do it “right” on platforms such as Eclipse RAP or JavaServer Faces (though, talking about the latter technology, it has somewhat improved in recent versions of Java EE, and JAX-RS / Jersey is also pretty good here).

As usual, the first approach is to browse the web, wiki pages and blogs to see whether there are resources and tutorials on the issue. And indeed, there are. Installed the formidable module using npm, again copy-and-pasted the code, ran it. Fine. Quite lean code to handle a file upload; I’ve seen this much larger over the last couple of years. And it’s next to fool-proof: my only question left unanswered (maybe I wasn’t careful enough looking at it) was quickly resolved by browsing stackoverflow.com for a while.
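To give an idea of how lean such upload code can be, here is a minimal sketch. It is not the script attached to this post, and it assumes the formidable 1.x API (IncomingForm, files.<field>.path and .name); newer formidable releases name these differently. The form field name upload, the port, the --serve switch and the function names are made up for this example.

```javascript
// Sketch of a file upload service, assuming the formidable 1.x API.
// Field name, port, the --serve switch and function names are illustrative.
const http = require('http');
const os = require('os');

// HTML form posting one file as multipart/form-data to /upload.
function renderUploadForm() {
  return '<form action="/upload" enctype="multipart/form-data" method="post">' +
         '<input type="file" name="upload">' +
         '<input type="submit" value="Upload">' +
         '</form>';
}

// Parse an incoming multipart request and report where the file ended up.
function handleUpload(req, res) {
  const formidable = require('formidable'); // loaded lazily: npm install formidable
  const form = new formidable.IncomingForm();
  form.uploadDir = os.tmpdir(); // assumption: uploads go to the temp directory
  form.parse(req, function (err, fields, files) {
    if (err || !files.upload) {
      res.writeHead(400, { 'Content-Type': 'text/plain' });
      res.end('Upload failed\n');
      return;
    }
    res.writeHead(200, { 'Content-Type': 'text/plain' });
    res.end('Stored ' + files.upload.name + ' as ' + files.upload.path + '\n');
  });
}

// Route POST /upload to the parser, show the form for everything else.
function handleRequest(req, res) {
  if (req.url === '/upload' && req.method === 'POST') {
    handleUpload(req, res);
  } else {
    res.writeHead(200, { 'Content-Type': 'text/html' });
    res.end(renderUploadForm());
  }
}

// Only start the server when explicitly asked to: node upload.js --serve
if (process.argv.indexOf('--serve') !== -1) {
  http.createServer(handleRequest).listen(8124, '127.0.0.1');
}
```

In this sketch formidable picks the random file name inside the upload directory, while the original name remains available for storing elsewhere.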

Some code, some modules: cradle

So now for the next step: In our business application, all the files uploaded to the system are stored somewhere in a central file system using a random name, whereas most of the essential information (date and time of upload, original file name, along with some metadata automatically extracted from the file) is stored within an RDBMS backend. As I happened to spend time with CouchDB quite often lately, I decided to also try storing upload information in a local CouchDB instance from within the node.js service. A quick look made me stumble across cradle, a “high level, caching CouchDB client for node.js”. Installation, as you already might expect by now, was easy thanks to npm again. The module itself is again rather simple to use, so adding the required lines of code to my growing upload script wasn’t really difficult and worked out of the box.
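Storing upload information this way might look roughly like the following. Again this is a sketch rather than the attached script: the database name uploads, the document fields, the --save-demo switch and the helper names are assumptions, and the database is assumed to exist already on a local CouchDB listening on port 5984.

```javascript
// Sketch of storing upload metadata in CouchDB via cradle.
// Database name, document fields and helper names are illustrative.

// Build the metadata document for one uploaded file.
function buildUploadRecord(originalName, storedPath, size, uploadedAt) {
  return {
    type: 'upload',
    originalName: originalName,
    storedPath: storedPath,
    size: size,
    uploadedAt: uploadedAt.toISOString()
  };
}

// Save a record; db is anything offering cradle's save(doc, callback).
function saveUploadRecord(db, record, callback) {
  db.save(record, callback);
}

// Connect to a local CouchDB instance and pick a database.
function openDatabase(name) {
  const cradle = require('cradle'); // loaded lazily: npm install cradle
  const connection = new (cradle.Connection)('http://127.0.0.1', 5984, { cache: true });
  return connection.database(name);
}

// Only talk to CouchDB when explicitly asked to: node store.js --save-demo
if (process.argv.indexOf('--save-demo') !== -1) {
  const record = buildUploadRecord('report.pdf', '/tmp/upload_example', 1024, new Date());
  saveUploadRecord(openDatabase('uploads'), record, function (err, result) {
    console.log(err ? 'Save failed: ' + JSON.stringify(err) : 'Saved: ' + JSON.stringify(result));
  });
}
```

Passing the database object into saveUploadRecord keeps the helper independent of a running CouchDB, which makes it easy to try out with a stand-in object.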

Some code, some modules: winston

In the end, this left open one of my last real considerations: logging. Traditionally, this is a problem people have attempted to solve quite a few times in the Java (EE) world, looking at the presence of log4j, commons-logging, java.util.logging, slf4j and a bunch of other logger implementations and frameworks available to date. Searching the npm registry proves that things are not much different in the node.js world. Eventually, after reading through a couple of articles, I came across winston, installed it, and got it to run in no time again. Playing with things took some time, but overall its usage feels familiar, at least if you know the common logging implementations in Java.
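For readers coming from log4j and friends, a minimal sketch of winston usage could look like this. It assumes a winston release before 3.0, where loggers are built with the winston.Logger constructor (3.x uses winston.createLogger instead); the log file name, the --log-demo switch and the helper names are assumptions for this example.

```javascript
// Sketch of upload logging with winston, assuming a pre-3.0 release.
// Log file name, the --log-demo switch and helper names are illustrative.

// Create a logger writing to the console and to a log file.
function createUploadLogger(filename) {
  const winston = require('winston'); // loaded lazily: npm install winston
  return new (winston.Logger)({
    transports: [
      new (winston.transports.Console)(),
      new (winston.transports.File)({ filename: filename })
    ]
  });
}

// Log one finished upload; logger only needs an info(message, metadata) method.
function logUpload(logger, originalName, size) {
  logger.info('upload finished', { file: originalName, bytes: size });
}

// Only write log output when explicitly asked to: node log.js --log-demo
if (process.argv.indexOf('--log-demo') !== -1) {
  logUpload(createUploadLogger('fileserver.log'), 'report.pdf', 1024);
}
```

The transports play about the same role as appenders in log4j: one logger, several destinations.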

Conclusion: Works. Rocks.

I attached the script this session resulted in, for the sake of completeness. It’s in no possible way beautiful, but it works, at least on my machines, and creating it had two effects: (a) It was immensely insightful as to what is possible with JavaScript outside the browser these days; it taught me quite a few basic things about how node.js works and how to make use of that for future purposes. And (b) it was quite fun. Few situations in which I felt like hitting a wall due to framework issues, bulky infrastructure or long build processes. Few situations of waiting for things to complete, being rather fast in what I was doing. Feeling my machine running at decent speed (and temperature) without burning a hole through my desk by having an IDE, an application server and an RDBMS running at the same time.

In some ways working with node.js kinda reminded me of diving deeper into Perl, a language I worked with intensely aeons ago, at the point in time at which I discovered (and learnt how to make use of) CPAN. Which, then again, is a platform we left behind, figuring out that Java (EE) provides a bunch of things which seemed reasonable and meaningful in those days, and still are today: just think of deployment and lifecycle models for applications, or of the JSR process and the “usual” expectation that there be some sort of (ideally vendor-independent) API or specification backed by a bunch of hopefully compliant implementations.

Still, this doesn’t change my experience: Working with node.js was very pleasant and quite fun. Travelling through the npm repository indeed felt like browsing an early version of CPAN (regarding the number of packages it contains) built on top of today’s standards in terms of design and usability. The documentation for both node.js itself and most of the modules was really good, given the early version numbers of most of these technologies. For what I tried, I ended up with a very straightforward and easy way of getting it done, allowing me to go as deep into things as I needed while still leaving it manageable, without having to deal with too many internal details of no relevance in this case. I am not sure yet to which extent I will make use of node.js in the near future (at least I noticed it is in use in parts of the “homebrew” applications on my webOS cellphone). But I see it’s a quite powerful way of implementing HTTP services (or, more generally, network services), and so, in our general strategy aiming at building an open, extensible system built on top of loosely coupled, accessible services, it might end up in a role similar to CouchDB: a technology that slowly makes its way into productive use, not as another “one-size-fits-all” solution but as one of many small parts solving dedicated problems. I’m curious to see how this will work out.

As a side note all along this path: Sometimes I wonder about the appearance of tools like node.js, CouchDB and friends, tools which seem to offer quite a bit more “agility” or extensibility compared to, for example, Java EE or .NET. Do I get usable prototypes faster? Am I, this way, faster in building high quality software? Is the software built this way more robust as far as changes are concerned? What are your thoughts?

Links

- fileserver.js – the script outcome of this session

- node.js docs collection, more specifically the current API reference

- node.js@googlegroups

- A Simple Webservice In node.js: Tutorial on how to build a simple RESTful web service using node.js. Great read, and especially useful as it pointed me to http-console. Indeed, as the page says: “HTTP has never been so much fun”.

- Part 1 and part 2 of a tutorial building an application backed by CouchDB, using HAML for markup templating – something still missing in my crude inline-html sample script. 😉